Embedded Vision Overview

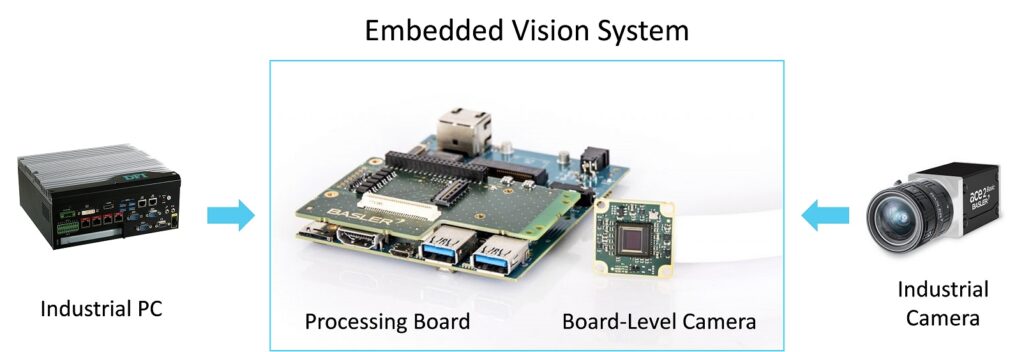

Embedded vision refers to the integration of image capture, processing, and analysis into compact, self-contained hardware, as opposed to traditional machine vision systems that rely on a separate PC to perform all processing. The key driver of the embedded vision trend is the sharp reduction in cost and power consumption of capable image signal processors (ISPs), neural processing units (NPUs), and FPGAs over the past decade, which makes it economically viable to deploy intelligent vision at every node in an industrial system.

The hardware landscape for embedded vision spans several tiers. At the lowest-power end, microcontrollers like the STM32H7 with OpenMV firmware can run simple classification and detection models at low frame rates. Mid-tier devices like the Raspberry Pi CM4, NVIDIA Jetson Nano, and Google Coral Dev Board offer Linux environments with hardware acceleration for deep learning inference. At the high end, Xilinx Zynq UltraScale+ and Intel/Altera SoC FPGAs combine programmable logic with ARM cores, enabling deterministic latency for real-time vision pipelines that must meet hard timing constraints.

Software framework selection is heavily influenced by the hardware target. NVIDIA Jetson platforms benefit from TensorRT for inference optimization: it applies hardware-specific layer fusion and can lower FP32 models to FP16 or INT8 precision. Google Coral and similar NPU-equipped devices require models fully quantized to INT8 using TensorFlow Lite's post-training quantization or quantization-aware training workflow; for Coral, the quantized model is then compiled with the Edge TPU Compiler. For FPGA targets, toolchains like Vitis AI (Xilinx) and Intel's OpenVINO-based FPGA AI Suite provide model compilation pipelines that partition computation between the programmable logic and the ARM application processors.
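To make the INT8 requirement concrete, the following is a minimal sketch of affine (scale and zero-point) quantization, the arithmetic that underlies integer quantization schemes of this kind. It is not the TensorFlow Lite or TensorRT implementation; the function names and the example range are illustrative assumptions.

```python
from typing import List, Tuple

QMIN, QMAX = -128, 127  # signed 8-bit integer range


def quant_params(x_min: float, x_max: float) -> Tuple[float, int]:
    """Return (scale, zero_point) mapping [x_min, x_max] onto [QMIN, QMAX]."""
    # Widen the range to include 0.0 so that zero is exactly representable.
    x_min = min(x_min, 0.0)
    x_max = max(x_max, 0.0)
    if x_max == x_min:
        return 1.0, 0  # degenerate range: any scale works
    scale = (x_max - x_min) / (QMAX - QMIN)
    zero_point = int(round(QMIN - x_min / scale))
    return scale, max(QMIN, min(QMAX, zero_point))


def quantize(values: List[float], scale: float, zero_point: int) -> List[int]:
    """Map real values to INT8, clipping anything outside the calibrated range."""
    return [max(QMIN, min(QMAX, int(round(v / scale)) + zero_point)) for v in values]


def dequantize(q: List[int], scale: float, zero_point: int) -> List[float]:
    """Recover approximate real values from INT8 codes."""
    return [(x - zero_point) * scale for x in q]


if __name__ == "__main__":
    # Hypothetical activation range observed during calibration.
    scale, zp = quant_params(0.0, 2.55)
    codes = quantize([0.0, 1.0, 2.55, 5.0], scale, zp)
    print(scale, zp, codes, dequantize(codes, scale, zp))
```

Values inside the calibrated range round-trip with an error of at most half a quantization step, while values outside it (5.0 in the example) saturate. This is why the calibration data used for post-training quantization should be representative of production inputs.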

Thermal management is a first-class design concern for embedded vision systems deployed in industrial environments. Camera sensors, ISPs, and inference accelerators all generate heat, and sustained operation at elevated ambient temperatures requires careful thermal simulation and heatsink or active-cooling design. Many edge devices throttle compute performance when die temperatures exceed set thresholds. If this behavior is not accounted for during system design, it can show up in production as unpredictable frame rate drops.
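One way to keep throttling predictable is for the application to lower its own frame rate before the hardware does. The sketch below shows a simple hysteresis governor. The thresholds, frame rates, and class name are hypothetical and would need to come from the device datasheet and thermal testing. The helper reads the Linux sysfs thermal interface, which reports millidegrees Celsius; which `thermal_zone` index corresponds to the SoC die is board-specific and assumed here.

```python
from dataclasses import dataclass


def read_die_temp_c(path: str = "/sys/class/thermal/thermal_zone0/temp") -> float:
    """Read a Linux thermal zone (millidegrees C). Zone index is an assumption."""
    with open(path) as f:
        return int(f.read().strip()) / 1000.0


@dataclass
class ThermalGovernor:
    """Hysteresis controller: drop to a reduced frame rate when hot,
    and return to full rate only after the die has cooled past resume_c."""
    throttle_c: float = 85.0   # hypothetical upper threshold
    resume_c: float = 75.0     # hypothetical lower threshold
    full_fps: float = 30.0
    reduced_fps: float = 10.0
    throttled: bool = False

    def update(self, die_temp_c: float) -> float:
        """Return the target frame rate for the current die temperature."""
        if not self.throttled and die_temp_c >= self.throttle_c:
            self.throttled = True
        elif self.throttled and die_temp_c <= self.resume_c:
            self.throttled = False
        return self.reduced_fps if self.throttled else self.full_fps


if __name__ == "__main__":
    gov = ThermalGovernor()
    for temp in (70.0, 86.0, 80.0, 74.0, 80.0):
        print(temp, gov.update(temp))
```

The gap between the two thresholds prevents the frame rate from oscillating when the temperature hovers near a single limit, so downstream consumers see a small number of well-defined rate changes.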

Communication interfaces are another key design dimension. Most embedded vision systems need to communicate inference results, metadata, or compressed images to a supervisory system. Common choices include GigE Vision (for industrial camera compatibility), MQTT over TCP/IP (for IoT integration), and custom binary protocols over CAN or RS-485 for deterministic real-time control. Selecting the right interface early in the design process avoids costly hardware revisions later.
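As an illustration of a custom binary protocol, the sketch below packs a single detection result into 8 bytes, the payload limit of a classic CAN data frame. The field layout (frame counter, class ID, quantized confidence, centre coordinates) is a hypothetical example, not a standard; only the payload encoding is shown, not the bus access itself.

```python
import struct
from typing import NamedTuple

# Little-endian: uint16 frame_id, uint8 class_id, uint8 confidence, uint16 x, uint16 y
_FMT = "<HBBHH"
assert struct.calcsize(_FMT) == 8  # fits one classic CAN frame


class Detection(NamedTuple):
    frame_id: int      # wraps at 65536
    class_id: int      # 0..255
    confidence: float  # 0.0..1.0, sent as 0..255
    x: int             # bounding-box centre, pixels
    y: int


def encode(d: Detection) -> bytes:
    """Serialize a detection into a fixed 8-byte payload."""
    conf_q = max(0, min(255, round(d.confidence * 255)))
    return struct.pack(_FMT, d.frame_id & 0xFFFF, d.class_id, conf_q, d.x, d.y)


def decode(payload: bytes) -> Detection:
    """Inverse of encode(); confidence is recovered to 1/255 resolution."""
    frame_id, class_id, conf_q, x, y = struct.unpack(_FMT, payload)
    return Detection(frame_id, class_id, conf_q / 255, x, y)


if __name__ == "__main__":
    payload = encode(Detection(65537, 3, 1.0, 320, 240))
    print(payload.hex(), decode(payload))
```

A fixed-size layout keeps transmission time constant per message, which suits the deterministic timing that CAN and RS-485 links are typically chosen for. The trade-off is that any change to the fields requires a coordinated update on both ends.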